Machines can transform cybersecurity as we know it

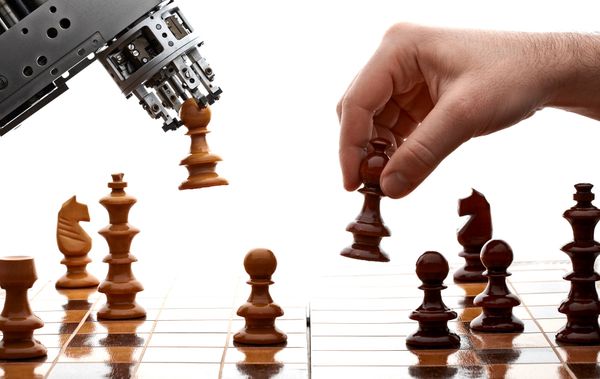

In the world's first machine-against-machine battle, bug-hunting bots proved they can find and exploit intricate software vulnerabilities with remarkable speed.

DARPA's Cyber Grand Challenge pitted seven machines against one another to find, patch, and exploit security holes on seven supercomputers.

The teams behind the bots loaded their autonomous systems onto the seven supercomputers. At the event, these launched software filled with some of the toughest security holes. The vulnerabilities were cleverly modeled on historical flaws like the Heartbleed bug (discovered in 2014), the bug exploited by the SQL Slammer worm (2003), and the Crackaddr bug (also 2003).

The bots were expected to attack, but also to defend themselves by deciding whether patching their own security flaws was a smart move. Patching is known to slow down the affected software as well as other services running on the machine.

Mayhem, one of the competing bots, decided to skip applying the fixes. It weighed the costs and the benefits of updating and the likelihood that another bot would actually exploit its holes before deciding whether the effort made sense or not.
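The trade-off described above can be sketched as a simple expected-cost comparison. This is only an illustration, not Mayhem's actual logic: the function name `should_patch`, its three parameters, and the example numbers are all hypothetical, and the scores are assumed to share one common scale.

```python
def should_patch(exploit_probability: float,
                 exploit_loss: float,
                 patch_overhead: float) -> bool:
    """Decide whether patching is worth its performance cost.

    Hypothetical model: patch only when the expected loss from leaving
    the hole open is larger than the slowdown the patch would cause.
    """
    if not 0.0 <= exploit_probability <= 1.0:
        raise ValueError("exploit_probability must be between 0 and 1")
    # Expected loss = chance a rival exploits the hole * damage if it does.
    expected_loss = exploit_probability * exploit_loss
    return expected_loss > patch_overhead


# Illustrative numbers only (assumed, not taken from the contest).
unlikely_attack = should_patch(0.1, 50.0, 10.0)  # expected loss 5 vs. cost 10
likely_attack = should_patch(0.5, 50.0, 10.0)    # expected loss 25 vs. cost 10
```

Under these assumed numbers, the bot would skip the patch when an attack looks unlikely and apply it when an attack looks likely, which mirrors the kind of weighing the article attributes to Mayhem.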

One particular machine stood out as it managed to crack the utterly complicated Crackaddr bug – Mechaphish, Yan Shoshitaishvili's creation.

“This type of exploit is really, really difficult”, said David Brumley, a Carnegie Mellon computer scientist overseeing the contest.

Unlike Mayhem, Mechaphish patched every hole it found, focusing on “subtle and complex” bugs. However, Mayhem's strategy worked better and the bot won the contest in the 96th round, despite several mysterious phases of inactivity.

Great expectations?

The machines were incredibly fast in detecting even the smallest flaws, yet automation isn't expected to replace humans in cyber-defense anytime soon.

“Blurring the line between man and machine, artificial intelligence is a great cyber-weapon, but can't handle the burden of fighting cyber threats alone”, writes Cristina Vatamanu, machine learning expert at Bitdefender. “Machine learning systems may yield false positives and a human's decision is needed to retrain those algorithms with proper data.”

In a world where malware creators outnumber the good guys, robots can prove extremely efficient in dealing with massive volumes of files, while humans deal with difficult decisions.

“We won't go in one fell swoop to fully automated network defense”, says DARPA's Arati Prabhakar. “But think about how powerful it will be for humans to leverage these kinds of machine tools. When humans start being able to do things they've never thought of before. That's when it gets really interesting.”

Author

Alexandra started writing about IT at the dawn of the decade - when an iPad was an eye-injury patch, we were minus Google+ and we all had Jobs.
